Utility Meter Data Analytics on AWS

Partner Solution Deployment Guide

February 2023

Sascha Janssen, Tony Bulding, John Mousa, Flora Eggers, Michael Litz, and Michael Hamilton, Amazon Web Services

Refer to the GitHub repository to view source files, report bugs, submit feature ideas, and post feedback about this Partner Solution. To comment on the documentation, refer to Feedback.

This Partner Solution was created by Amazon Web Services (AWS). Partner Solutions are automated reference deployments that help people deploy popular technologies on AWS according to AWS best practices. If you’re unfamiliar with AWS Partner Solutions, refer to the AWS Partner Solution General Information Guide.

Overview

This guide covers the information you need to deploy Utility Meter Data Analytics to the AWS Cloud.

Costs and licenses

There is no cost to use this Partner Solution, but you will be billed for any AWS services or resources that this Partner Solution deploys. For more information, refer to the AWS Partner Solution General Information Guide.

Architecture

Deploying this solution with default parameters builds the following meter data analytics (MDA) environment in the AWS Cloud.

As shown in Figure 1, the solution sets up the following:

- Amazon Simple Storage Service (Amazon S3) buckets to store the following:
  - Weather and topology data from external databases.
  - Raw meter data.
  - Partitioned data.
  - Processed data from the AWS Step Functions model training workflow.
- AWS Lambda functions to do the following:
  - Load and transform topology data from an external database.
  - Process late-arriving meter data.
  - Obtain API query results of partitioned business data and Amazon SageMaker inferences.
- An AWS Glue crawler to transform meter data from meter data management (MDM) systems and head end systems (HES) into partitioned data.
- Amazon EventBridge to process and store late-arriving meter data in the correct partition.
- AWS Glue Data Catalog for a central catalog of weather, topology, and meter data.
- Amazon Athena to provide query results of partitioned data.
- Two AWS Step Functions workflows:
  - Model training to build a machine learning (ML) model using partitioned business data.
  - Batch processing of partitioned business data from the Data Catalog and ML model data for use in forecasting.
- Amazon SageMaker to generate energy usage inferences using the ML model.
- Amazon API Gateway to manage API queries.

Deployment options

This Partner Solution provides one deployment option:

- Deploy Utility Meter Data Analytics into an existing VPC. This option provisions MDA in your existing AWS infrastructure.

This Partner Solution provides a template for this option. It also lets you configure instance types and MDA settings.

Predeployment steps

Meter data generator and HES simulator

The input adapter regularly pulls data from the HES simulator and prepares the data for further processing. During deployment, you can choose to deploy the meter data generator and HES simulator stacks by choosing ENABLED for the MeterDataGenerator parameter. You also configure the number of meters and the interval between meter reads with the TotalDevices and GenerationInterval parameters, respectively. The default values for these parameters generate 50,000 reads every five minutes.
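As a rough sizing aid, the default generator rate stated above can be converted into daily volume and average throughput. This assumes that reads are produced at a steady rate around the clock and uses no values other than the stated 50,000 reads every five minutes.

```latex
\begin{aligned}
\text{intervals per day} &= \frac{24 \times 60\ \text{min}}{5\ \text{min}} = 288 \\
\text{reads per day} &= 50{,}000 \times 288 = 14{,}400{,}000 \\
\text{average reads per second} &= \frac{50{,}000}{5 \times 60\ \text{s}} \approx 166.7
\end{aligned}
```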

Deployment steps

- Sign in to your AWS account, and launch this Partner Solution, as described under Deployment options. The AWS CloudFormation console opens with a prepopulated template.
- Choose the correct AWS Region, and then choose Next.
- On the Create stack page, keep the default setting for the template URL, and then choose Next.
- On the Specify stack details page, change the stack name if needed. Review the parameters for the template. Provide values for the parameters that require input. For all other parameters, review the default settings and customize them as necessary. When you finish reviewing and customizing the parameters, choose Next.

  Unless you’re customizing the Partner Solution templates or are instructed otherwise in this guide’s Predeployment steps section, don’t change the default settings for the following parameters: QSS3BucketName, QSS3BucketRegion, and QSS3KeyPrefix. Changing the values of these parameters will modify code references that point to the Amazon Simple Storage Service (Amazon S3) bucket name and key prefix. For more information, refer to the AWS Partner Solutions Contributor’s Guide.
- On the Configure stack options page, you can specify tags (key-value pairs) for resources in your stack and set advanced options. When you finish, choose Next.
- On the Review page, review and confirm the template settings. Under Capabilities, select all of the check boxes to acknowledge that the template creates AWS Identity and Access Management (IAM) resources and might require the ability to automatically expand macros.
- Choose Create stack. The stack takes about 30 minutes to deploy.
- Monitor the stack’s status. When the status is CREATE_COMPLETE, the Utility Meter Data Analytics deployment is ready.
- To view the created resources, choose the Outputs tab.
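The console steps above can also be scripted. The following is a minimal sketch using the boto3 CloudFormation client, assuming you already have the template URL (TEMPLATE_URL below is a placeholder, not the real location). The parameter MeterDataGenerator comes from this guide; the template also has required parameters, such as the existing-VPC settings, whose names are not listed here, so you must add them yourself. The capabilities list mirrors selecting every check box on the Review page.

```python
from typing import Dict, List

# Placeholder (assumption): use the template URL published in the solution's GitHub repository.
TEMPLATE_URL = "https://example.com/utility-meter-data-analytics/main.template.yaml"


def build_create_stack_args(stack_name: str, parameters: Dict[str, str]) -> dict:
    """Build keyword arguments for the CloudFormation CreateStack API."""
    return {
        "StackName": stack_name,
        "TemplateURL": TEMPLATE_URL,
        "Parameters": [
            {"ParameterKey": key, "ParameterValue": value}
            for key, value in parameters.items()
        ],
        # Equivalent to selecting all Capabilities check boxes on the Review page.
        "Capabilities": [
            "CAPABILITY_IAM",
            "CAPABILITY_NAMED_IAM",
            "CAPABILITY_AUTO_EXPAND",
        ],
    }


def launch_stack(stack_name: str, parameters: Dict[str, str]) -> List[dict]:
    """Create the stack, wait for CREATE_COMPLETE, and return its Outputs."""
    import boto3  # imported here so build_create_stack_args works without boto3

    cfn = boto3.client("cloudformation")
    cfn.create_stack(**build_create_stack_args(stack_name, parameters))
    # The waiter polls every 30 s; 120 attempts allows roughly one hour.
    cfn.get_waiter("stack_create_complete").wait(
        StackName=stack_name,
        WaiterConfig={"Delay": 30, "MaxAttempts": 120},
    )
    stack = cfn.describe_stacks(StackName=stack_name)["Stacks"][0]
    return stack.get("Outputs", [])
```

For example, `launch_stack("utility-meter-data-analytics", {"MeterDataGenerator": "ENABLED"})` creates the stack with the simulator enabled and returns the same values shown on the Outputs tab. The waiter raises an error if the stack rolls back, which takes the place of watching the console for CREATE_COMPLETE.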

Postdeployment steps

Set up Amazon Managed Grafana dashboards

This solution includes a set of Amazon Managed Grafana dashboards. These dashboards use Amazon Athena to query data stored in a data lake and depend on several of the optional datasets for full functionality.

Amazon Managed Grafana supports Security Assertion Markup Language (SAML) and AWS IAM Identity Center for authentication. If your organization doesn’t have SAML or AWS IAM Identity Center set up, consult your administrator.

Create a Grafana workspace

- In the Amazon Managed Grafana console, choose Create workspace.
- Enter a Workspace Name (for example, mda_solution) and, optionally, a Workspace Description.
- Choose Next.
- On the Configure Settings page, for Authentication access, select Security Assertion Markup Language (SAML).
- For Permission type, choose Service managed.
- Choose Next.
- On the Service managed permission settings page, for IAM permission access settings, choose Current account.
- For Data sources and notification channels, select Amazon Athena.
- Choose Next.
- Choose Create workspace.
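The console choices above map onto the Amazon Managed Grafana CreateWorkspace API. The following minimal sketch uses the boto3 `grafana` client and is offered as an illustration, not as the solution's own tooling. Two assumptions are worth flagging: when you call the API instead of using the console, the service-managed IAM role may not be created for you, so the sketch accepts an optional role ARN; and SAML still has to be configured for the workspace after it is created.

```python
from typing import Optional


def build_workspace_args(
    name: str,
    description: Optional[str] = None,
    role_arn: Optional[str] = None,
) -> dict:
    """Keyword arguments for the Amazon Managed Grafana CreateWorkspace API."""
    args = {
        "workspaceName": name,
        "accountAccessType": "CURRENT_ACCOUNT",  # IAM permission access: Current account
        "authenticationProviders": ["SAML"],     # Authentication access: SAML
        "permissionType": "SERVICE_MANAGED",     # Permission type: Service managed
        "workspaceDataSources": ["ATHENA"],      # Data sources: Amazon Athena
    }
    if description:
        args["workspaceDescription"] = description
    if role_arn:
        # Assumption: outside the console you may need to supply the workspace role yourself.
        args["workspaceRoleArn"] = role_arn
    return args


def create_workspace(
    name: str,
    description: Optional[str] = None,
    role_arn: Optional[str] = None,
) -> str:
    """Create the workspace and return its ID."""
    import boto3  # imported here so build_workspace_args works without boto3

    grafana = boto3.client("grafana")
    response = grafana.create_workspace(**build_workspace_args(name, description, role_arn))
    return response["workspace"]["id"]
```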

Select SSO users and groups

After creating a Grafana workspace, select the single sign-on (SSO) users and groups you want to give access to it. For more information, refer to Managing user and group access to Amazon Managed Grafana.

To access the workspace, users sign in at the Grafana workspace URL, which is shown on the Workspaces page of the Amazon Managed Grafana console.

Set up Amazon Athena as a data source in Grafana

Add Amazon Athena as a data source in Amazon Managed Grafana. For more information, refer to Amazon Athena and Data Source Management.

Import dashboards into Amazon Managed Grafana

Upload or paste the contents of dashboard JSON files from the /scripts/assets/grafana folder of the solution’s repository to Amazon Managed Grafana. For more information, refer to Importing a dashboard.

After importing, you may need to verify that the names of dashboard panel data sources match AWS Glue database names. If you receive errors, check panel data sources and variables in dashboard settings. For more information, refer to Adding or editing a panel and Dashboards.
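If you prefer to import the dashboards from a script, the following sketch posts each JSON file to the Grafana HTTP API endpoint `/api/dashboards/db` using only the Python standard library. It assumes you have a workspace API key or service account token (passed as `token`) and a local clone of the repository. If a dashboard file contains `__inputs` data source placeholders, this endpoint won’t resolve them, and the console import described above is the safer route.

```python
import json
import urllib.request
from pathlib import Path
from typing import List


def build_import_payload(dashboard_json: str, overwrite: bool = True) -> dict:
    """Wrap an exported dashboard JSON document for POST /api/dashboards/db."""
    dashboard = json.loads(dashboard_json)
    # A null id makes Grafana create the dashboard instead of updating one by numeric id.
    dashboard["id"] = None
    return {"dashboard": dashboard, "overwrite": overwrite}


def import_dashboard(workspace_url: str, token: str, path: Path) -> dict:
    """Upload one dashboard file and return Grafana's JSON response."""
    payload = build_import_payload(path.read_text(encoding="utf-8"))
    request = urllib.request.Request(
        f"{workspace_url.rstrip('/')}/api/dashboards/db",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())


def import_all(workspace_url: str, token: str, folder: str = "scripts/assets/grafana") -> List[dict]:
    """Import every *.json dashboard in the repository folder, in name order."""
    return [import_dashboard(workspace_url, token, p) for p in sorted(Path(folder).glob("*.json"))]
```

After the upload, the same check applies: confirm that each panel’s data source and variables point at the right AWS Glue database.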

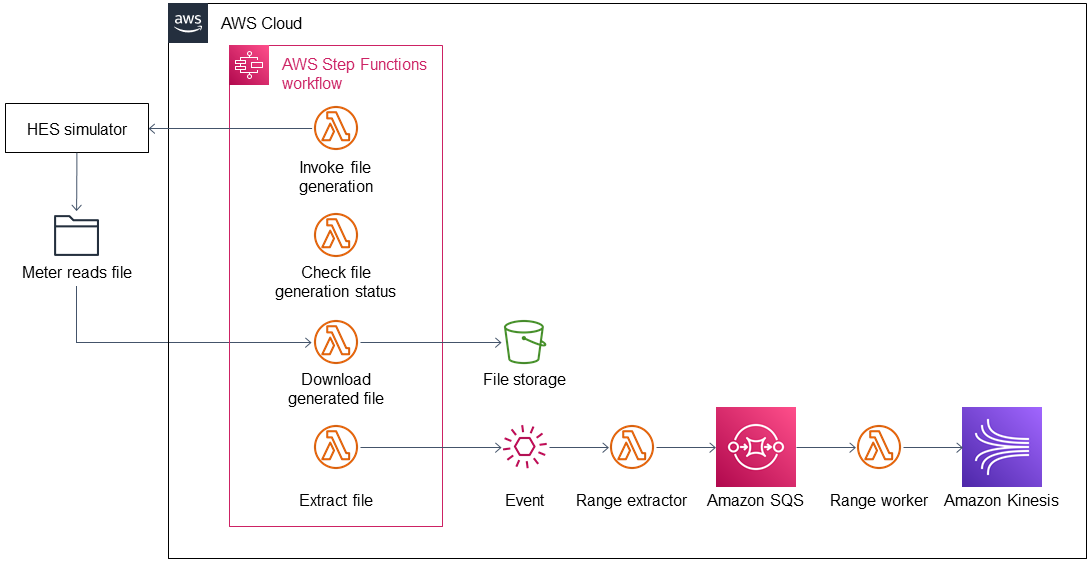

Input adapter

As shown in Figure 2, the solution’s input adapter loads meter reads from an external source (such as an HES or an FTP server) and prepares them for processing.

An AWS Step Functions workflow orchestrates generating the meter-reads file in the external database and downloading it, as a compressed file, to the inbound bucket. Another process extracts the file from the inbound bucket and stores it in the uncompressed folder, so the inbound bucket holds both the compressed and the uncompressed files. The solution later deletes the uncompressed files to reduce storage costs.

After the file is extracted, the solution generates an event that invokes a Lambda function for further processing. The file-range-extractor Lambda function extracts range information from the uncompressed file based on the file size and number of chunks (configurable). A range is a group of lines that you want to process together. Extracted range information is sent to Amazon Simple Queue Service (Amazon SQS).
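The range extraction described above can be sketched as follows. This is an illustration of the idea, not the file-range-extractor function’s actual code: it splits a file of a known size into at most a configurable number of byte ranges and pushes each boundary forward to the next newline so that every range contains whole lines. In the solution each range would be sent to Amazon SQS; a worker could then fetch only its bytes, for example with an S3 ranged GET.

```python
import io
from typing import BinaryIO, List, Tuple


def extract_ranges(f: BinaryIO, file_size: int, chunks: int) -> List[Tuple[int, int]]:
    """Split a file into at most `chunks` byte ranges that each hold whole lines.

    Ranges are (start, end) pairs with `end` exclusive. Each boundary is pushed
    forward to the next newline so that no line is split across two ranges.
    """
    if chunks < 1:
        raise ValueError("chunks must be at least 1")
    target = max(1, file_size // chunks)  # ideal range size in bytes
    ranges: List[Tuple[int, int]] = []
    start = 0
    while start < file_size:
        tentative_end = start + target
        if tentative_end >= file_size or len(ranges) == chunks - 1:
            ranges.append((start, file_size))  # last range takes the remainder
            break
        # Read from the byte just before the boundary: if it is a newline the
        # boundary is already aligned; otherwise consume the rest of that line.
        f.seek(tentative_end - 1)
        end = tentative_end - 1 + len(f.readline())
        ranges.append((start, end))
        start = end
    return ranges


def extract_ranges_from_bytes(data: bytes, chunks: int) -> List[Tuple[int, int]]:
    """Convenience wrapper for data already held in memory."""
    return extract_ranges(io.BytesIO(data), len(data), chunks)
```

Because the last range always absorbs the remainder, the function never returns more ranges than requested, and it may return fewer when lines are long relative to the target size.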

Each worker takes a range from the Amazon SQS queue and processes (parses and transforms) the corresponding meter reads before sending each element to Amazon Kinesis. Because each worker handles its own range, the input file can be processed in parallel. The worker transforms each CSV line into JSON and creates a separate object for each reading type. The Amazon Kinesis data stream, which scales on demand, ingests the data into the staging area.
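The per-line transformation can be sketched like this. The column names below are hypothetical, because the generator’s actual field names aren’t listed in this guide; the sketch only shows the pattern of turning one CSV line into one JSON object per reading type.

```python
import csv
import io
import json
from typing import List

# Hypothetical column layout: identifying fields first, then one column per reading type.
ID_COLUMNS = ["arrival_time", "reading_time", "meter_id"]
READING_COLUMNS = ["load", "current", "power_factor", "va", "kw", "voltage"]


def transform_line(line: str) -> List[str]:
    """Turn one CSV meter-read line into one JSON record per reading type."""
    row = next(csv.reader(io.StringIO(line)))
    fields = dict(zip(ID_COLUMNS + READING_COLUMNS, row))
    records = []
    for reading_type in READING_COLUMNS:
        value = fields.get(reading_type, "")
        if value == "":
            continue  # skip reading types that are absent on this line
        records.append(json.dumps({
            "meter_id": fields["meter_id"],
            "reading_time": fields["reading_time"],
            "arrival_time": fields["arrival_time"],
            "reading_type": reading_type,
            "reading_value": float(value),
        }))
    return records
```

Each resulting record could then be sent to the stream with the Kinesis PutRecords API; using the meter ID as the partition key is one reasonable choice, but it is an assumption here.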

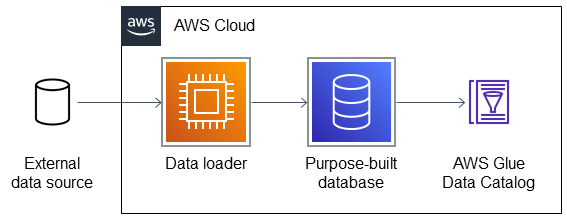

Dataflows

You can set up connections to other data sources by configuring additional dataflows. A dataflow connects to the external database, loads data from it, and stores data in a purpose-built database that can be accessed from the solution’s central Data Catalog.

The solution comes with two sample dataflows: weather and topology. To add a new dataflow, create a data pipeline that loads data from the source, prepares it, and stores the results in an appropriate data store. Then add that data store to the solution’s Data Catalog.
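One way to add a new data store to the Data Catalog, when the dataflow writes its results to Amazon S3, is an AWS Glue crawler. The following sketch uses the boto3 Glue client; the crawler name, IAM role, database, and S3 paths are hypothetical, and the sketch assumes the Glue database already exists. Other data stores (for example, JDBC sources) need different crawler targets.

```python
from typing import List


def build_crawler_args(name: str, role_arn: str, database: str, s3_paths: List[str]) -> dict:
    """Keyword arguments for the AWS Glue CreateCrawler API (S3 targets only)."""
    return {
        "Name": name,
        "Role": role_arn,
        "DatabaseName": database,  # tables discovered by the crawler land here
        "Targets": {"S3Targets": [{"Path": p} for p in s3_paths]},
    }


def register_dataflow(name: str, role_arn: str, database: str, s3_paths: List[str]) -> None:
    """Create the crawler and run it once to populate the Data Catalog."""
    import boto3  # imported here so build_crawler_args works without boto3

    glue = boto3.client("glue")
    glue.create_crawler(**build_crawler_args(name, role_arn, database, s3_paths))
    glue.start_crawler(Name=name)
```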

Data partitioning

The curated data in the integration stage S3 bucket is partitioned by reading type, year, month, day, and hour, as follows:

s3://IntegrationBucket/reading_type=<reading_type_value>/year=<year>/month=<month>/day=<day>/hour=<hour>/<meter-data-file-in-parquet-format>

The lowest level of the partition tree holds all meter reads for a given hour of a day. To optimize query performance, the data is stored in a column-based file format (Parquet).
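The partition layout above can be expressed as a small helper that derives the S3 key for a file of reads. This is a sketch under two assumptions that this guide doesn’t confirm: partition values are derived from the read timestamp in UTC, and month, day, and hour are zero-padded to two digits. Check the solution’s ETL job for the exact formatting before relying on it.

```python
from datetime import datetime, timezone


def partition_key(bucket: str, reading_type: str, read_time: datetime, file_name: str) -> str:
    """Build the Hive-style S3 location for one Parquet file of meter reads."""
    # Assumption: naive timestamps are treated as UTC; aware ones are converted to UTC.
    if read_time.tzinfo is None:
        t = read_time.replace(tzinfo=timezone.utc)
    else:
        t = read_time.astimezone(timezone.utc)
    return (
        f"s3://{bucket}/reading_type={reading_type}"
        f"/year={t.year}/month={t.month:02d}/day={t.day:02d}/hour={t.hour:02d}"
        f"/{file_name}"
    )
```

Because every level is a `key=value` directory, Athena can skip whole partitions when a query filters on reading type, year, month, day, or hour.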

Late-arriving data

The data lake handles late-arriving meter reads, which are detected as soon as the data reaches the staging stage. When a late read is detected, an event is sent to Amazon EventBridge. The extract, transform, and load (ETL) pipeline then moves the late read to the correct partition and ensures that the data remains stored in an optimized format.
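The following sketch illustrates one possible lateness rule and the EventBridge entry it could produce. Both are assumptions, not the solution’s actual logic: a read counts as late here when its hour partition (from the read timestamp) is earlier than the hour in which it arrived, and the event source and detail type are hypothetical names.

```python
import json
from datetime import datetime, timezone
from typing import Optional


def hour_partition(t: datetime) -> datetime:
    """Truncate a timezone-aware timestamp to the start of its UTC hour."""
    if t.tzinfo is None:
        raise ValueError("timestamps must be timezone-aware")
    return t.astimezone(timezone.utc).replace(minute=0, second=0, microsecond=0)


def late_read_entry(
    meter_id: str,
    reading_time: datetime,
    arrival_time: datetime,
    event_bus: str = "default",
) -> Optional[dict]:
    """Return an EventBridge PutEvents entry if the read arrived after its hour partition."""
    target = hour_partition(reading_time)
    if target >= hour_partition(arrival_time):
        return None  # arrived within its own hour: not late under this rule
    return {
        "Source": "mda.staging",        # hypothetical event source
        "DetailType": "LateMeterRead",  # hypothetical detail type
        "EventBusName": event_bus,
        "Detail": json.dumps({
            "meter_id": meter_id,
            "reading_time": reading_time.isoformat(),
            "partition_hour": target.isoformat(),
        }),
    }
```

A non-empty entry could be published with `boto3.client("events").put_events(Entries=[entry])`, and an EventBridge rule matching the detail type would then hand the read to the pipeline that rewrites the target partition.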

Data formats

Inbound format

The inbound meter-data format can be adjusted. The following table shows the sample inbound data format produced by the meter data generator:

| Field | Type | Format | Description |
|---|---|---|---|
| | timestamp | | Timestamp when the meter read reaches the source system. |
| | timestamp | | Timestamp of the actual meter read. |
| | string | | uuid |
| | string | | |
| | double | | Load, unit: A |
| | double | | Current, unit: A |
| | double | | Power factor, between 0 and 1 |
| | double | | Volt ampere, unit: VA |
| | double | | Kilowatt, unit: kW |
| | double | | Voltage, unit: V |

Integrated format

Data are stored in the following format in the integration stage:

| Field | Type | Format |
|---|---|---|
| | String | |
| | Double | |
| | Timestamp | |
| | String | |
| | String | |
| | String | |
| | String | |
| | String | |
| reading_type | String (Partitioned) | |
| year | String (Partitioned) | |
| month | String (Partitioned) | |
| day | String (Partitioned) | |
| hour | String (Partitioned) | |

Troubleshooting

For troubleshooting common Partner Solution issues, refer to the AWS Partner Solution General Information Guide and Troubleshooting CloudFormation.

Customer responsibility

After you deploy a Partner Solution, confirm that your resources and services are updated and configured—including any required patches—to meet your security and other needs. For more information, refer to the Shared Responsibility Model.

Feedback

To submit feature ideas and report bugs, use the Issues section of the GitHub repository for this Partner Solution. To submit code, refer to the Partner Solution Contributor’s Guide. To submit feedback on this deployment guide, use the following GitHub links:

Notices

This document is provided for informational purposes only. It represents current AWS product offerings and practices as of the date of issue of this document, which are subject to change without notice. Customers are responsible for making their own independent assessment of the information in this document and any use of AWS products or services, each of which is provided "as is" without warranty of any kind, whether expressed or implied. This document does not create any warranties, representations, contractual commitments, conditions, or assurances from AWS, its affiliates, suppliers, or licensors. The responsibilities and liabilities of AWS to its customers are controlled by AWS agreements, and this document is not part of, nor does it modify, any agreement between AWS and its customers.

The software included with this paper is licensed under the Apache License, version 2.0 (the "License"). You may not use this file except in compliance with the License. A copy of the License is located at https://aws.amazon.com/apache2.0/ or in the accompanying "license" file. This code is distributed on an "as is" basis, without warranties or conditions of any kind, either expressed or implied. Refer to the License for specific language governing permissions and limitations.